Setting up Hostinger as a Gateway to Your Home‑Server Ollama (GPU‑Enabled)

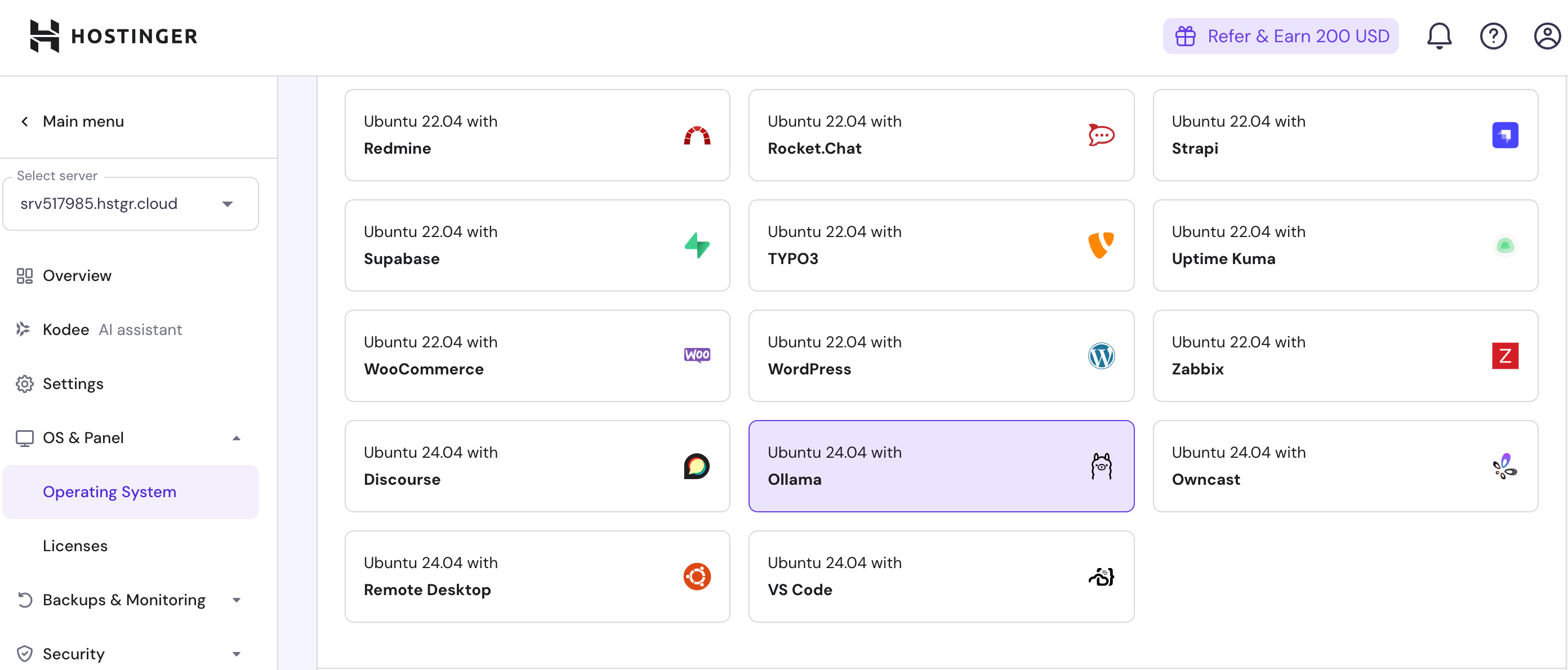

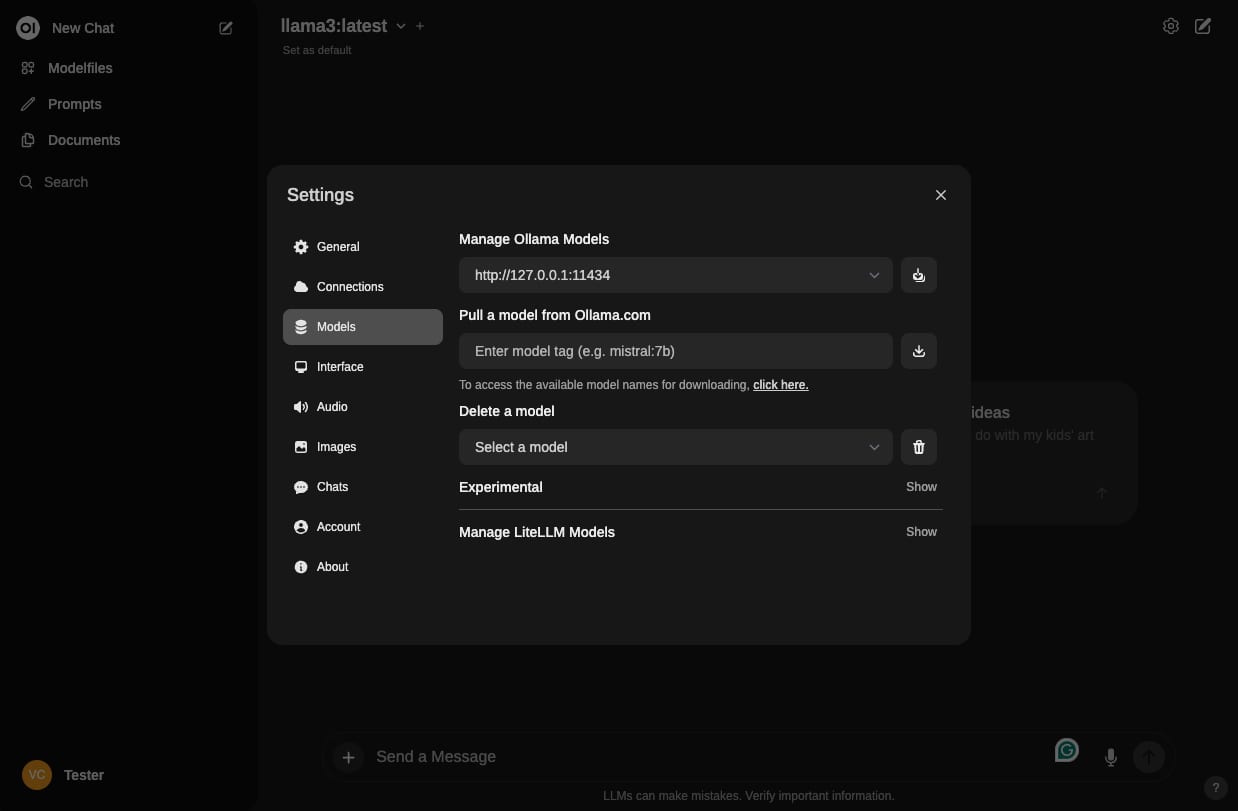

I have KVM 2 - n8n - Ubuntu 24.04 on Hostinger. I want to access and use Ollama with my GPU on my home server. Hostinger has Traefik but it is not configured. I want Hostinger to be my gateway.

Traefik Application Proxy

Effortlessly expose your microservices to the internet.

- Open Source

- Ingress Controller + Reverse Proxy + Load balancer

- Services Auto-Discovery

- HTTP/2/3, TCP, UDP, gRPC, Websockets

- Lightweight & Cloud-Native

- Real-time Metrics & Distributed Tracing

- Public Plugins with Rich Catalog

- Ingress-View Dashboard

- Canary Deployments

- Default Ingress in SUSE Rancher K3s

- Default Ingress in Nutanix NKP

- Supports Docker Swarm, Hashicorp Nomad, Azure Service Fabric, K8s Distros, Virtual Machines, & Bare Metal

- Web Application Firewall (WAF)

- OpenTelemetry Metrics, Traces, & Logs

Answer

Below is a step‑by‑step guide that turns your Hostinger KVM (Ubuntu 24.04) into a secure reverse‑proxy gateway for an Ollama instance running on your home server. The workflow uses Traefik on Hostinger, a VPN/SSH tunnel (or Cloudflare Tunnel) to expose the home server, and Docker‑Compose for easy deployment.

1. Overview of the Architecture

| Component | Role | Key Configuration |
|---|---|---|
| Hostinger VM | Edge router & TLS termination | Traefik container, Docker network |
| Home Server | GPU‑powered Ollama | Docker container, GPU drivers, SSH/Cloudflare Tunnel |
| n8n | Workflow automation | Calls Ollama API via Hostinger domain |
| DNS | Public access | Subdomain pointing to Hostinger IP |

The goal is to expose https://ollama.yourdomain.com (or any sub‑domain) that forwards traffic to the Ollama API on your home machine, while keeping the GPU workload local.

2. Prepare the Home Server (GPU + Ollama)

- Install GPU drivers & CUDA

  - Follow the vendor’s instructions for your GPU (NVIDIA, AMD, etc.).

  - Verify with `nvidia-smi` or the equivalent tool for your vendor.

- Install Docker & Docker‑Compose

      sudo apt update && sudo apt install -y docker.io docker-compose
      sudo systemctl enable --now docker

- Run Ollama with GPU support

      docker run -d --gpus all --name ollama \
        -p 11434:11434 \
        -v ~/.ollama:/root/.ollama \
        ollama/ollama:latest

  - `--gpus all` gives the container access to all GPUs. On NVIDIA hardware this flag only works once the NVIDIA Container Toolkit is installed on the host.

  - Port `11434` is the default Ollama API port.

- Test locally

      curl http://localhost:11434/api/tags

  You should see a JSON list of available models.
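
Before starting the container, it can help to confirm that the prerequisites above are actually in place. The following is a minimal sketch, assuming an NVIDIA GPU; the function names `missing_tools` and `preflight` are hypothetical helpers, not part of Docker or Ollama.

```shell
#!/bin/sh
# Print every command from the argument list that is not on PATH.
missing_tools() {
  for tool in "$@"; do
    command -v "$tool" >/dev/null 2>&1 || printf '%s\n' "$tool"
  done
}

# Report what the home server still lacks, then check GPU access from Docker.
# Not called automatically; run `preflight` by hand on the home server.
preflight() {
  missing=$(missing_tools docker nvidia-smi curl)
  if [ -n "$missing" ]; then
    # Unquoted on purpose: prints one "missing:" line per tool.
    printf 'missing: %s\n' $missing
    return 1
  fi
  # Assumption: NVIDIA GPU with the NVIDIA Container Toolkit installed.
  # Running nvidia-smi in a throwaway container shows whether Docker sees the GPU.
  docker run --rm --gpus all ubuntu nvidia-smi
}
```

If `preflight` prints the usual `nvidia-smi` table from inside the container, the `--gpus all` flag should work for the Ollama container as well.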

3. Expose the Home Server to the Internet

Option A: SSH Reverse Tunnel (Simple & Secure)

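Run this on the home server:

    ssh -R 11434:localhost:11434 user@hostinger_ip

- Keep the session alive (e.g., with `autossh`).

- The tunnel forwards port `11434` on the Hostinger VM to port `11434` on your home server.

- By default, sshd binds remote forwards to the VM's loopback interface only. A Docker container on the VM cannot reach that address, so the forward must listen on an address the container can connect to, which requires enabling `GatewayPorts` in the VM's `sshd_config`. Keep that port closed to the public internet.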
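
To keep the tunnel alive across reboots and network drops, one option is to run `autossh` as a systemd service on the home server. The sketch below only prints a unit file; the helper name `autossh_unit`, the unit name in the usage note, and the example arguments are assumptions, and it presumes key-based SSH login is already set up for the local user.

```shell
#!/bin/sh
# Print a systemd unit that keeps the reverse tunnel up with autossh.
# $1 = local user that owns the SSH key, $2 = remote login (user@host).
autossh_unit() {
  cat <<EOF
[Unit]
Description=Reverse SSH tunnel for Ollama
After=network-online.target
Wants=network-online.target

[Service]
User=$1
Environment=AUTOSSH_GATETIME=0
ExecStart=/usr/bin/autossh -M 0 -N -o ServerAliveInterval=30 -o ServerAliveCountMax=3 -o ExitOnForwardFailure=yes -R 11434:localhost:11434 $2
Restart=always
RestartSec=10

[Install]
WantedBy=multi-user.target
EOF
}

# Placeholder values; replace with your own user and VM address.
autossh_unit youruser user@hostinger_ip
```

The output could be written with `sudo tee /etc/systemd/system/ollama-tunnel.service` and started with `sudo systemctl enable --now ollama-tunnel`. `-M 0` turns off autossh's own monitor port and relies on the SSH keepalive options instead.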

Option B: Cloudflare Tunnel (Zero‑Trust)

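- Install Cloudflare Tunnel (`cloudflared`) on the home server:

      curl -L https://github.com/cloudflare/cloudflared/releases/latest/download/cloudflared-linux-amd64 -o cloudflared
      sudo mv cloudflared /usr/local/bin/
      sudo chmod +x /usr/local/bin/cloudflared

- Authenticate and create a tunnel:

      cloudflared tunnel login
      cloudflared tunnel create ollama-tunnel
      cloudflared tunnel route dns ollama-tunnel ollama.yourdomain.com

- Run the tunnel:

      cloudflared tunnel run ollama-tunnel

The tunnel exposes https://ollama.yourdomain.com directly to your home server through Cloudflare. For this to work, `cloudflared` also needs an ingress rule that maps the hostname to http://localhost:11434, normally in its `config.yml`. Note that with this option the DNS record points at Cloudflare rather than at the Hostinger VM, so requests do not pass through Traefik on Hostinger; Section 4 applies to Option A.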
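
For Option B, `cloudflared` needs to know which local service the hostname maps to. A minimal sketch of a `config.yml` generator follows; the helper name `cloudflared_config` and the credentials path are placeholders (the real credentials file is the JSON written by `cloudflared tunnel create`).

```shell
#!/bin/sh
# Print a cloudflared config that routes one hostname to the local Ollama API.
# $1 = tunnel name or ID, $2 = path to the tunnel credentials JSON, $3 = hostname.
cloudflared_config() {
  cat <<EOF
tunnel: $1
credentials-file: $2
ingress:
  - hostname: $3
    service: http://localhost:11434
  # Catch-all rule: anything else gets a 404.
  - service: http_status:404
EOF
}

# Placeholder credentials path; substitute the file created for your tunnel.
cloudflared_config ollama-tunnel /path/to/credentials.json ollama.yourdomain.com
```

Saved as `~/.cloudflared/config.yml`, this is picked up by `cloudflared tunnel run ollama-tunnel`; `cloudflared tunnel ingress validate` can check the rules first.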

4. Install and Configure Traefik on Hostinger

4.1. Create a Docker Network

docker network create traefik-net

4.2. Traefik Docker‑Compose

Create docker-compose.yml on Hostinger:

    version: "3.8"

    services:
      traefik:
        image: traefik:v3.0
        container_name: traefik
        command:
          # Dashboard/API without auth on :8080 (not published below)
          - "--api.insecure=true"
          # Discover containers via Docker, but only those opted in by label
          - "--providers.docker=true"
          - "--providers.docker.exposedbydefault=false"
          - "--entrypoints.web.address=:80"
          - "--entrypoints.websecure.address=:443"
          # Let's Encrypt via the TLS-ALPN challenge on port 443
          - "--certificatesresolvers.le.acme.tlschallenge=true"
          - "--certificatesresolvers.le.acme.email=you@example.com"
          - "--certificatesresolvers.le.acme.storage=/letsencrypt/acme.json"
        ports:
          - "80:80"
          - "443:443"
        volumes:
          - "/var/run/docker.sock:/var/run/docker.sock:ro"
          - "./letsencrypt:/letsencrypt"
        networks:
          - traefik-net
        labels:
          - "traefik.enable=true"

    networks:
      traefik-net:
        external: true

Traefik will automatically discover containers that carry the `traefik.enable=true` label.

4.3. Create a Reverse‑Proxy Service for Ollama

Add a second service to the same docker-compose.yml:

      ollama-proxy:
        image: nginx:stable-alpine
        container_name: ollama-proxy
        restart: unless-stopped
        networks:
          - traefik-net
        labels:
          - "traefik.enable=true"
          - "traefik.http.routers.ollama.rule=Host(`ollama.yourdomain.com`)"
          - "traefik.http.routers.ollama.entrypoints=websecure"
          - "traefik.http.routers.ollama.tls.certresolver=le"
          # Port nginx listens on inside the container
          - "traefik.http.services.ollama.loadbalancer.server.port=11434"
        environment:
          # Informational only: the static nginx.conf is not templated,
          # so nginx does not read these variables.
          - TARGET_HOST=hostinger_ip
          - TARGET_PORT=11434
        volumes:
          - ./nginx.conf:/etc/nginx/conf.d/default.conf:ro

nginx.conf (simple reverse proxy):

    server {
        listen 11434;

        location / {
            proxy_pass http://hostinger_ip:11434;
            proxy_set_header Host $host;
            proxy_set_header X-Real-IP $remote_addr;
        }
    }

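Replace `hostinger_ip` with the address on your Hostinger VM where the SSH tunnel listens. The container cannot reach a forward that is bound only to the VM's loopback interface, so make sure the tunnel listens on an address that is reachable from the Docker network.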

4.4. Deploy

docker-compose up -d

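Traefik will now expose https://ollama.yourdomain.com and forward traffic to the `ollama-proxy` container, which in turn forwards to the SSH tunnel listening on the Hostinger VM. With a Cloudflare Tunnel, requests reach the home server through Cloudflare instead and do not pass through this proxy.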
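
Because the endpoint is now public and Ollama itself has no login, you may want to protect the router with authentication. The following is a minimal sketch using Traefik's `basicauth` middleware via labels; the middleware name `ollama-auth` and the helper functions are hypothetical, and the password hash is assumed to come from a tool such as `openssl passwd -apr1`.

```shell
#!/bin/sh
# Double every "$" so Docker Compose does not treat the hash as a variable.
compose_escape() {
  sed 's/\$/$$/g'
}

# Print two labels for the ollama-proxy service.
# $1 = user name, $2 = password hash (for example from: openssl passwd -apr1)
basic_auth_labels() {
  escaped=$(printf '%s' "$2" | compose_escape)
  printf '      - "traefik.http.middlewares.ollama-auth.basicauth.users=%s:%s"\n' "$1" "$escaped"
  printf '      - "traefik.http.routers.ollama.middlewares=ollama-auth"\n'
}

# Usage (prompts for a password, then prints the labels to paste):
#   basic_auth_labels n8n "$(openssl passwd -apr1)"
```

The printed lines go under `labels:` of the `ollama-proxy` service; clients such as n8n then send the matching Basic Auth credentials.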

5. Configure n8n to Use Ollama

- In your n8n workflow, add an HTTP Request node.

- Set the method to POST and the URL to `https://ollama.yourdomain.com/api/chat` (or the appropriate Ollama endpoint).

- Use Basic Auth or a Bearer Token if you enabled authentication on the proxy.

- Pass the JSON payload expected by Ollama (`model` and `messages` for `/api/chat`; `model` and `prompt` for `/api/generate`).
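
The request the HTTP Request node sends can be tried from a shell first. This is a minimal sketch, assuming a model named `llama3.2` has been pulled (the model name is a placeholder) and that the message contains no double quotes or backslashes; `chat_payload` and `ask_ollama` are hypothetical helper names.

```shell
#!/bin/sh
# Build a non-streaming request body for Ollama's /api/chat endpoint.
# Assumes $1 (model) and $2 (message) need no JSON escaping.
chat_payload() {
  printf '{"model":"%s","messages":[{"role":"user","content":"%s"}],"stream":false}' "$1" "$2"
}

# POST the message through the gateway (not called automatically).
# $1 = base URL, $2 = model, $3 = message
ask_ollama() {
  curl -fsS "$1/api/chat" \
    -H 'Content-Type: application/json' \
    -d "$(chat_payload "$2" "$3")"
}

# Show the body that would be sent; paste it into the n8n node's JSON body.
chat_payload llama3.2 "Hello"
echo
```

Setting `"stream": false` makes Ollama return a single JSON object instead of a stream of chunks, which is usually easier to handle in an n8n node.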

6. Security & Maintenance Tips

| Item | Recommendation |
|---|---|
| TLS | Traefik’s Let’s Encrypt resolver automatically renews certificates. |
| Firewall | On Hostinger, block all inbound ports except 80/443 and the SSH port (22) that the reverse tunnel and your own administration rely on. |
| SSH Tunnel | Use autossh to keep the tunnel alive. |
| Cloudflare Tunnel | Enables zero‑trust access and automatic TLS termination. |
| GPU Monitoring | Use nvidia-smi to monitor GPU utilization and memory; docker stats covers only the container's CPU and RAM. |
| Logs | Check Traefik logs (docker logs traefik) for routing issues. |
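
For the GPU monitoring item, `nvidia-smi` can emit machine-readable CSV that a small script can compare against a threshold. A minimal sketch, assuming an NVIDIA GPU; `gpu_over_threshold` and `watch_gpu` are hypothetical helper names and the alert is just a printed line.

```shell
#!/bin/sh
# Succeed if the utilization in a "util, mem_used, mem_total" CSV line
# is strictly greater than the threshold in $2 (percent).
gpu_over_threshold() {
  util=${1%%,*}   # keep everything before the first comma
  [ "$util" -gt "$2" ]
}

# Check each GPU once and print a line for any above the threshold.
# $1 = threshold in percent. Not called automatically.
watch_gpu() {
  nvidia-smi --query-gpu=utilization.gpu,memory.used,memory.total \
    --format=csv,noheader,nounits |
  while IFS= read -r line; do
    if gpu_over_threshold "$line" "$1"; then
      echo "GPU busy: $line"
    fi
  done
}
```

Running `watch_gpu 90` from cron or a systemd timer would give a simple periodic check; the `echo` could be swapped for any notification command.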

7. Troubleshooting Checklist

| Symptom | Likely Cause | Fix |
|---|---|---|
| curl https://ollama.yourdomain.com/api/tags returns 502 | Proxy mis‑configured | Verify the proxy_pass target in nginx.conf and that the SSH tunnel is up. |
| GPU not used | Container lacks GPU access | Re‑run Ollama with --gpus all. |
| Traefik shows “no matching router” | DNS not pointing to Hostinger | Update DNS A record. |
| Let’s Encrypt fails | Email or storage path wrong | Check acme.json permissions (chmod 600). |
| n8n node fails | Wrong endpoint or auth | Verify URL and headers in n8n. |
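
The checklist above can be partly automated by looking at the HTTP status the gateway returns. The mapping below is a rough sketch rather than an exhaustive diagnosis; `status_hint` and `check_gateway` are hypothetical helper names.

```shell
#!/bin/sh
# Map an HTTP status code from the gateway to the most likely hop at fault.
status_hint() {
  case "$1" in
    200) echo "ok" ;;
    401|403) echo "auth: check the credentials sent by the client" ;;
    404) echo "traefik: no router matched; check the Host rule and labels" ;;
    502|504) echo "upstream: check nginx proxy_pass and the tunnel" ;;
    000) echo "network: DNS, firewall, or TLS failed before any HTTP response" ;;
    *) echo "unexpected status $1; check docker logs traefik" ;;
  esac
}

# Request /api/tags through the gateway and explain the result.
# $1 = base URL, e.g. https://ollama.yourdomain.com. Not called automatically.
check_gateway() {
  code=$(curl -s -o /dev/null -w '%{http_code}' "$1/api/tags")
  status_hint "$code"
}
```

curl reports `000` when it never received an HTTP response, which points at DNS, the firewall, or the certificate rather than at Ollama.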

8. Summary

- Home Server: install GPU drivers, run Ollama with `--gpus all`.

- Expose the Ollama port via SSH reverse tunnel or Cloudflare Tunnel.

- Hostinger: set up Traefik (Docker‑Compose) and an Nginx reverse‑proxy container.

- n8n: point to the Hostinger sub‑domain to call Ollama.

With this setup, all LLM inference stays on your home GPU, while Hostinger handles TLS termination, routing, and acts as a single point of entry for your workflows.

Happy automating!
